Aestheticizing Audio

Creating audio visualizations to convey the feeling behind music.

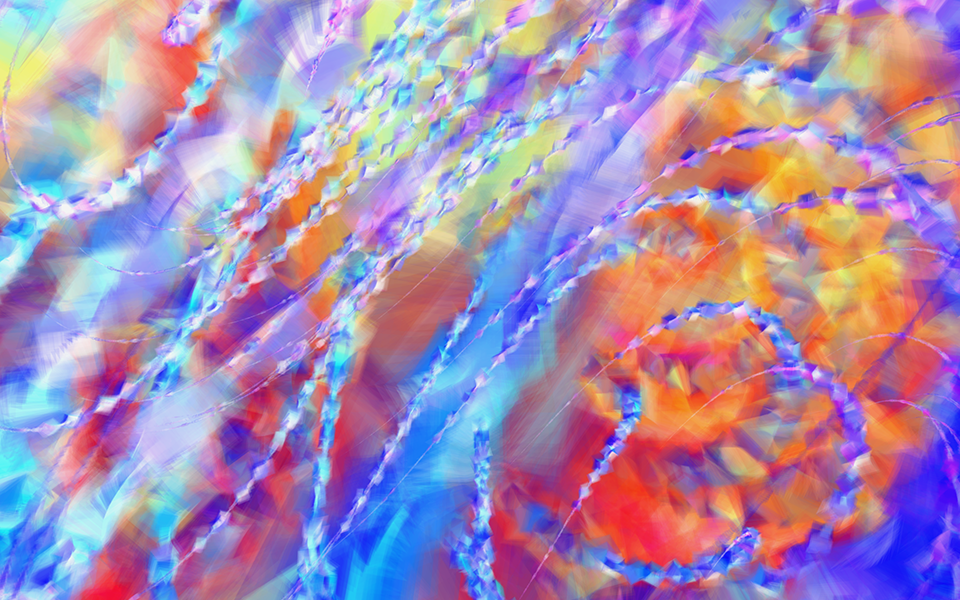

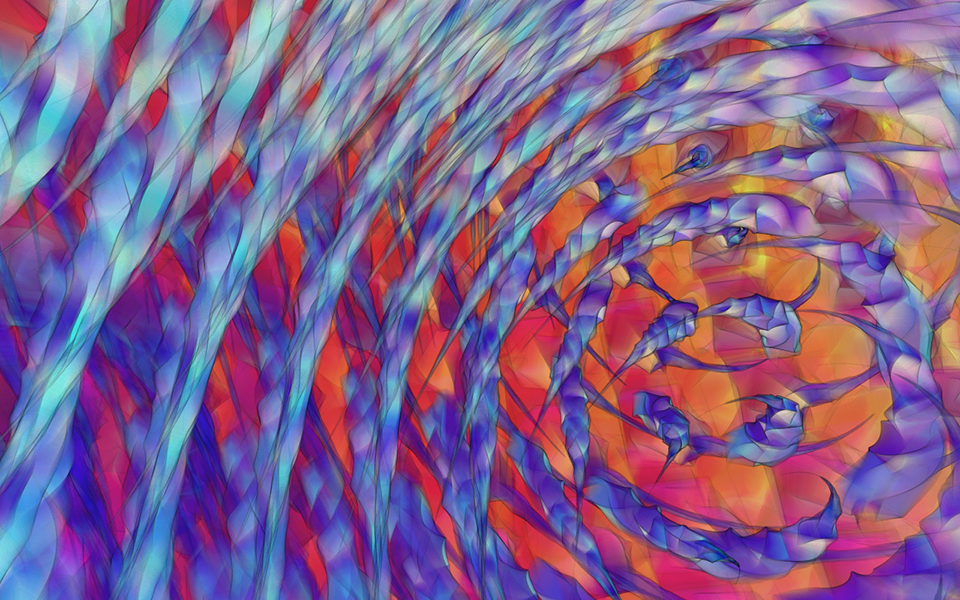

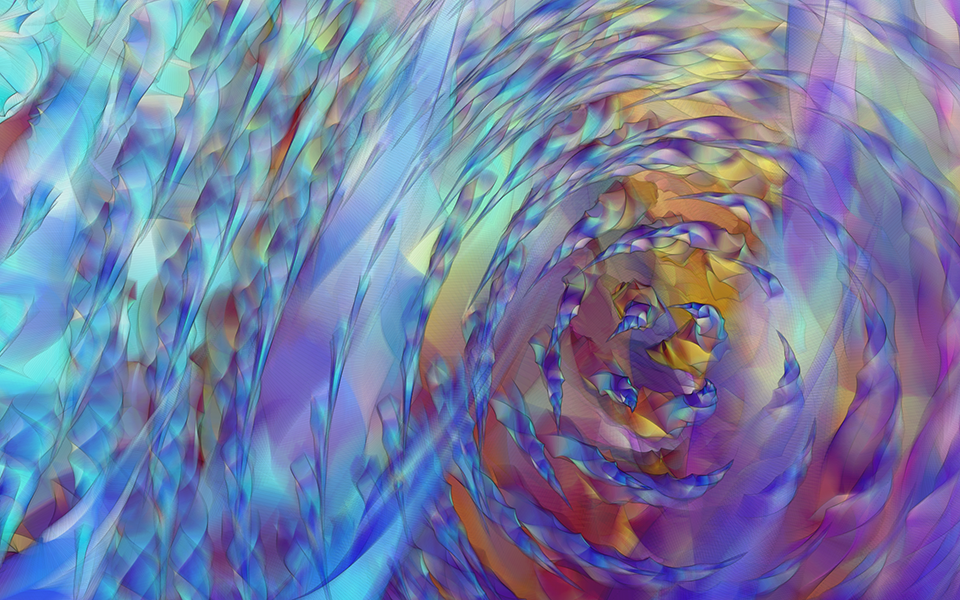

Above: Nest + Brambles - Amroth (full video). Recorded from the image projected on a wall to mimic the museum installation.

GitHub

September - November, 2017

This project was created for the Gregg Museum of Art and Design in Raleigh, North Carolina. Starting from the questions I usually ask in computational play, I wanted to explore how visuals could represent the feeling behind music. The result was a program written in Processing. Taking audio as input, it generates visuals by mapping properties of the audio, such as amplitude, frequency data, and the current time in the song, to visual parameters. The piece was shown on a projector in the museum, so the video at the top of the page is a recording of one of the songs playing on my projector at home.
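The mapping described above can be sketched roughly as follows. This is a minimal, hypothetical illustration in plain Java (the language Processing is built on), not the code from the project: the class and method names, the parameter ranges, and the choice of RMS level and the strongest frequency bin as features are all assumptions. In a real Processing sketch, an audio library such as Minim or the Processing Sound library would normally supply amplitude and FFT analysis; a naive DFT is used here only to keep the example self-contained.

```java
/**
 * Hypothetical sketch (not the project's code): turn one frame of audio samples
 * plus the current song position into a few visual parameters.
 */
public class AudioToVisuals {

    /** Linear re-mapping, similar in spirit to Processing's built-in map(). */
    static float mapRange(float v, float inMin, float inMax, float outMin, float outMax) {
        return outMin + (v - inMin) * (outMax - outMin) / (inMax - inMin);
    }

    /** Root-mean-square level of a frame: 0 for silence, about 0.707 for a full-scale sine. */
    static float rms(float[] frame) {
        double sum = 0;
        for (float s : frame) sum += s * s;
        return (float) Math.sqrt(sum / frame.length);
    }

    /** Magnitude spectrum via a naive DFT (O(n^2); an FFT library would be used in practice). */
    static float[] magnitudes(float[] frame) {
        int n = frame.length;
        float[] mags = new float[n / 2];
        for (int k = 0; k < n / 2; k++) {
            double re = 0, im = 0;
            for (int t = 0; t < n; t++) {
                double angle = 2 * Math.PI * k * t / n;
                re += frame[t] * Math.cos(angle);
                im -= frame[t] * Math.sin(angle);
            }
            mags[k] = (float) Math.hypot(re, im);
        }
        return mags;
    }

    /** Index of the strongest frequency bin, used here as a crude "where is the energy" feature. */
    static int peakBin(float[] mags) {
        int best = 0;
        for (int k = 1; k < mags.length; k++) {
            if (mags[k] > mags[best]) best = k;
        }
        return best;
    }

    /** Illustrative visual parameters; the names and ranges are assumptions. */
    static final class Visuals {
        final float scale, hue, alpha;

        Visuals(float scale, float hue, float alpha) {
            this.scale = scale;
            this.hue = hue;
            this.alpha = alpha;
        }

        @Override
        public String toString() {
            return String.format("scale=%.1f hue=%.1f alpha=%.1f", scale, hue, alpha);
        }
    }

    static Visuals frameToVisuals(float[] frame, float songPosMs, float songLengthMs) {
        float level = rms(frame);
        float[] mags = magnitudes(frame);
        float progress = songPosMs / songLengthMs;                          // 0 at start, 1 at end
        float scale = mapRange(level, 0f, 1f, 20f, 200f);                   // louder -> larger shapes
        float hue = mapRange(peakBin(mags), 0f, mags.length - 1, 0f, 255f); // higher peak -> shifted hue
        float alpha = mapRange(progress, 0f, 1f, 40f, 160f);                // denser as the song goes on
        return new Visuals(scale, hue, alpha);
    }

    public static void main(String[] args) {
        // Synthetic frame: 8 cycles of a sine wave across 64 samples, amplitude 0.5.
        float[] frame = new float[64];
        for (int t = 0; t < frame.length; t++) {
            frame[t] = 0.5f * (float) Math.sin(2 * Math.PI * 8 * t / 64);
        }
        System.out.println(frameToVisuals(frame, 30_000f, 120_000f));
    }
}
```

In a running sketch, a function like `frameToVisuals` would be called once per frame with the latest audio buffer, and the drawing code would read its fields.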

Explorations and findings

The basic structure and idea of the piece were in place early on, and most of the project time went into tweaking the variables controlling the "look" of the piece so that it reflected the mood of the music being played.

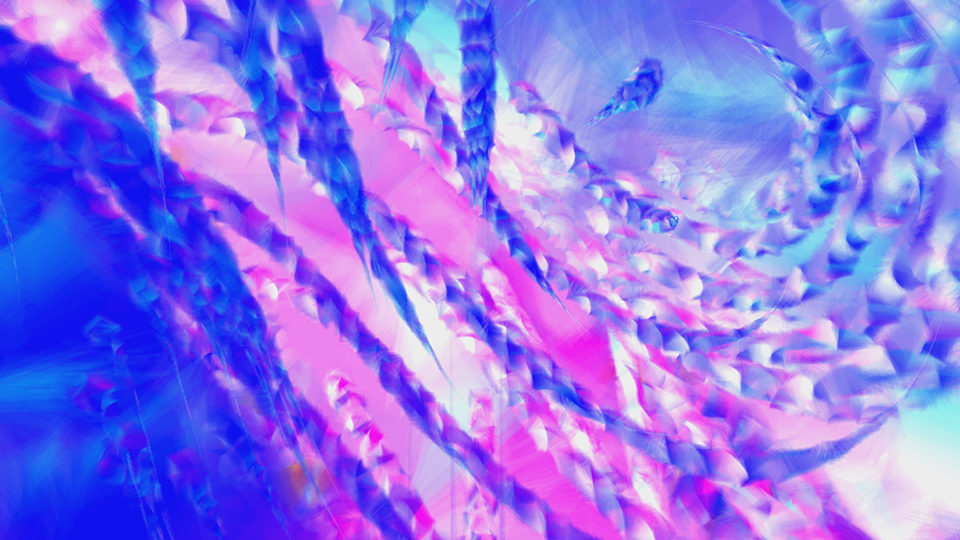

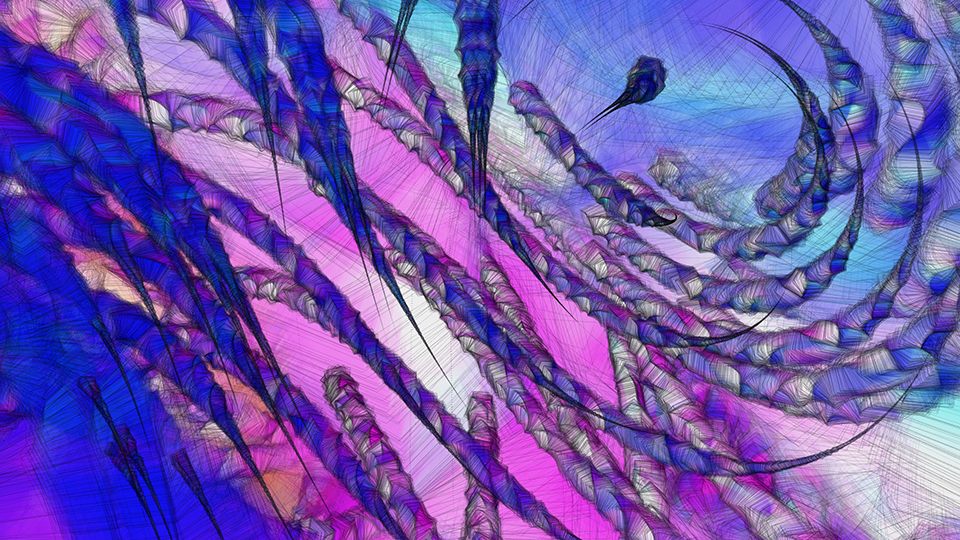

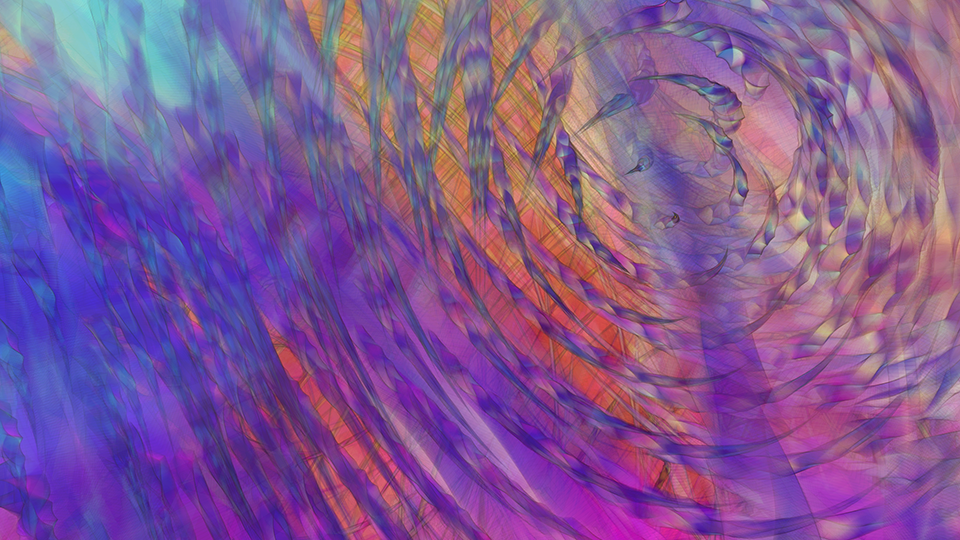

The visual variables I played with were mainly scale, color, stroke, transparency, and visual transience. While manipulating these variables, I had to maintain a balance between audio and visuals. For example, setting the scale too high made the visuals overpower the music, since differences between passages might not show up in the piece as it played. On the other hand, highly transient visuals let the music overpower the visuals: one could see even subtle changes in a passage, but those changes might distract from or wash out previously established visuals.
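Visual transience can be thought of as how quickly earlier marks fade away. One common Processing technique, assumed here rather than taken from the project, is to skip clearing the canvas with `background()` each frame and instead draw a translucent full-canvas rectangle, so older marks fade geometrically. The hypothetical helper below estimates how many frames of "history" stay visible for a given per-frame fade alpha (0 to 255), which makes the trade-off between transience and persistence explicit.

```java
/**
 * Hypothetical model (not the project's code) of "visual transience": each frame, a
 * translucent background-colored rectangle with the given fade alpha is drawn over
 * the canvas, so an old mark's contrast shrinks by a constant factor per frame.
 */
public class TrailPersistence {

    /** Fraction of a mark's original contrast left after `frames` frames of fading. */
    static double remaining(int fadeAlpha, int frames) {
        double keep = 1.0 - fadeAlpha / 255.0; // contrast kept per frame
        return Math.pow(keep, frames);
    }

    /** Smallest number of frames after which a mark falls to or below `threshold` (0 < threshold < 1). */
    static int framesToFade(int fadeAlpha, double threshold) {
        if (fadeAlpha <= 0) return Integer.MAX_VALUE; // never fades: unlimited history
        if (fadeAlpha >= 255) return 1;               // fully cleared every frame: no history
        double keep = 1.0 - fadeAlpha / 255.0;
        return (int) Math.ceil(Math.log(threshold) / Math.log(keep));
    }

    public static void main(String[] args) {
        int fps = 60; // assumed frame rate
        for (int alpha : new int[] {5, 20, 80}) {
            int frames = framesToFade(alpha, 0.05);
            System.out.printf("fade alpha %3d -> %4d frames (~%.1f s) until 5%% contrast remains%n",
                    alpha, frames, frames / (double) fps);
        }
    }
}
```

Small fade values keep a long trail of earlier passages on screen; large values make the piece responsive but short-lived. The model ignores 8-bit rounding in the frame buffer, which in practice can leave faint ghost trails that never fully clear at very low fade values.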

In short, my preliminary investigation found the following (loose) relationships between the variables I explored and the attributes of the music they contributed to (+) or detracted from (-):

- Scale: +mood, -history

- Color: +mood

- Stroke: -dreaminess, +focus

- Transparency: +dreaminess, +mood, +history

- Attack: -history, -dreaminess
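The list does not define "attack". Assuming it means how quickly the visuals respond when the music gets louder (borrowing the term from audio envelopes), a common way to control it is an envelope follower with separate attack and release rates. In the hypothetical sketch below, a fast attack makes the visuals jump at every onset, which would fit the "-history, -dreaminess" entry, while a slow attack lets them swell gradually. The class name and coefficients are illustrative assumptions, not taken from the project.

```java
/** Hypothetical envelope follower: smooths a per-frame level before it drives the visuals. */
public class EnvelopeFollower {
    private final float attack;  // 0..1: fraction of the gap closed per frame while the level rises
    private final float release; // 0..1: fraction of the gap closed per frame while the level falls
    private float value = 0f;

    EnvelopeFollower(float attack, float release) {
        this.attack = attack;
        this.release = release;
    }

    /** Feed one new level reading (e.g. an RMS value) and return the smoothed value. */
    float update(float level) {
        float coeff = level > value ? attack : release;
        value += coeff * (level - value);
        return value;
    }

    public static void main(String[] args) {
        float[] levels = {0f, 1f, 1f, 1f, 0f, 0f, 0f}; // a sudden loud note, then silence
        EnvelopeFollower sharp = new EnvelopeFollower(0.9f, 0.1f); // fast attack
        EnvelopeFollower soft = new EnvelopeFollower(0.2f, 0.1f);  // slow attack
        for (float l : levels) {
            System.out.printf("in %.1f  sharp %.3f  soft %.3f%n", l, sharp.update(l), soft.update(l));
        }
    }
}
```

Both followers share the same slow release, so sound fades out of the visuals gradually; only the speed of the response to new sound differs.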

I did not account for several other variables I could have looked at, the most obvious being the shapes of the objects in the visualization. I went with cubes because, despite their symmetry, their orientation is unambiguous. Using the affordances of the cube, I could achieve flowing lines and create the illusion of three-dimensional objects. I can see similar opportunities with other shapes, but researching how different shapes affect the feeling of the piece was outside the scope of this project.

I found it very hard to write an algorithm that would generate convincing visuals for music of different genres. For instance, the music I chose to play at the show was mostly ambient, repetitive, and atmospheric. In a word, I would describe it as "dreamy", and I tailored my visuals to this kind of music. The same algorithm would not work as well for, say, heavy metal. Genres differ in other ways that have to be kept in mind too: some rely on contrast in the type of sound while keeping the level relatively constant (e.g. heavy metal), while others rely on contrast and variation in sound level rather than type of sound to create their feeling (e.g. ambient, spaced-out music). Since I was focusing on the latter, I made heavy use of sound levels to control my visuals.
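The distinction between contrast in type of sound and contrast in level could, in principle, be measured. As a hypothetical heuristic, not something the piece implemented, one could compare how much loudness (RMS level) varies across frames with how much brightness varies, using the spectral centroid as a common rough proxy for timbre. The synthetic pure tones below are crude stand-ins for the two kinds of music, not real recordings, and all names are illustrative.

```java
/** Hypothetical heuristic: does a passage get its contrast from loudness or from timbre? */
public class ContrastProfile {

    /** Root-mean-square level of one frame. */
    static double rms(double[] frame) {
        double sum = 0;
        for (double s : frame) sum += s * s;
        return Math.sqrt(sum / frame.length);
    }

    /** Spectral centroid in bins: magnitude-weighted mean frequency bin (naive DFT). */
    static double centroid(double[] frame) {
        int n = frame.length;
        double weighted = 0, total = 0;
        for (int k = 1; k < n / 2; k++) { // skip the DC bin
            double re = 0, im = 0;
            for (int t = 0; t < n; t++) {
                double angle = 2 * Math.PI * k * t / n;
                re += frame[t] * Math.cos(angle);
                im -= frame[t] * Math.sin(angle);
            }
            double mag = Math.hypot(re, im);
            weighted += k * mag;
            total += mag;
        }
        return total == 0 ? 0 : weighted / total;
    }

    /** Coefficient of variation (standard deviation / mean), a scale-free measure of contrast. */
    static double cv(double[] xs) {
        double mean = 0;
        for (double x : xs) mean += x;
        mean /= xs.length;
        if (mean == 0) return 0;
        double var = 0;
        for (double x : xs) var += (x - mean) * (x - mean);
        return Math.sqrt(var / xs.length) / mean;
    }

    /** "level" if loudness varies more across frames than brightness does, otherwise "timbre". */
    static String dominantContrast(double[][] frames) {
        double[] levels = new double[frames.length];
        double[] centroids = new double[frames.length];
        for (int i = 0; i < frames.length; i++) {
            levels[i] = rms(frames[i]);
            centroids[i] = centroid(frames[i]);
        }
        return cv(levels) >= cv(centroids) ? "level" : "timbre";
    }

    /** Synthetic test tone: `cycles` full sine cycles across n samples. */
    static double[] sine(int n, int cycles, double amp) {
        double[] out = new double[n];
        for (int t = 0; t < n; t++) out[t] = amp * Math.sin(2 * Math.PI * cycles * t / n);
        return out;
    }

    public static void main(String[] args) {
        // Stand-in for ambient music: same pitch, swelling volume.
        double[][] swells = { sine(64, 4, 0.1), sine(64, 4, 0.5), sine(64, 4, 0.9) };
        // Stand-in for heavy metal: steady volume, shifting brightness.
        double[][] riffs = { sine(64, 2, 0.7), sine(64, 10, 0.7), sine(64, 24, 0.7) };
        System.out.println(dominantContrast(swells) + " / " + dominantContrast(riffs));
    }
}
```

Running `main` prints `level / timbre`. A check like this could help decide which feature should drive the visuals for a given track, though real music would need far more robust features than a single centroid.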

Further work + reading

What started as a fun experiment with audio opened up really interesting questions about the meaning of moods and feelings and their associations with visual elements. My investigations for this project were not very deep, but I did arrive at an interesting framework of ideas for implementing visuals. I can easily see this project being extended to explore the relationships between audio and visuals and how to marry the two. As immersive media becomes increasingly important, so does the role of audio in interface design, and this sort of investigation could prove invaluable for developing an immersive audio-visual system.

A further investigation could make use of modern techniques like machine learning. As noted, my algorithm does not account for wildly different musical genres. Could an algorithm interpret the "feeling" behind music and create corresponding visuals? This would entail operationalizing variables in both music and visuals: is there a relationship between observable, objective metadata from audio files and people's subjective interpretation of the music? Is there a similar relationship for the visual medium? If so, could a neural network be trained to learn these relationships?

Scott Reinhard's thesis on interaction gestalt principles might be a worthwhile read for its findings on the "feelings" conveyed by the properties of interactions.